About the Spinsy Casino Australia Review Methodology

How this Spinsy Casino Australia review is researched and updated for Australian players, with practical checks for payouts, bonus terms, support, and safety.

🔎 Editorial method and source weighting

🧱 Editorial method and source weighting - framework

This page explains how the review itself is produced. I do not rank features by headline size; I weight process reliability, payment consistency, and support clarity first. Every claim in the final score must link to a timestamped checkpoint in my notes. If a point cannot be reproduced, it does not survive the edit. That rule keeps the review useful for real players rather than just polished for search snippets.

Integrity also means publishing limits of confidence. If a behavior is inconsistent, I mark it as conditional rather than definitive. Responsible gaming controls are included in methodology because outcome quality is tied to user discipline. A platform can look stable in ideal use and unstable under impulsive play. Method notes must capture both contexts to be honest.

✅ Editorial method and source weighting - action steps

Reproducibility is the backbone of methodology. I rerun account actions in separate windows, compare outcomes, and only then publish a verdict. One smooth attempt is never enough evidence. I also log where friction appears: onboarding, verification, cashier queue, or escalation. This lets readers see not just what happened, but where problems tend to emerge in sequence.
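The rules above (timestamped checkpoints, repeat runs, and a conditional label for inconsistent behavior) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the tooling actually used for this review: the names `Checkpoint`, `label_claim`, and `friction_by_stage` are hypothetical, the two-run minimum is one reading of "one smooth attempt is never enough," and the sample dates are made up.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime

# Stage names taken from the friction points listed above.
STAGES = ("onboarding", "verification", "cashier queue", "escalation")


@dataclass(frozen=True)
class Checkpoint:
    """One logged test run (hypothetical structure)."""
    timestamp: datetime  # when the run was logged
    stage: str           # one of STAGES
    smooth: bool         # True if the run completed without friction


def label_claim(checkpoints: list[Checkpoint]) -> str:
    """Classify a claim from its logged runs."""
    if len(checkpoints) < 2:
        return "dropped"      # a single attempt is not enough evidence
    outcomes = {cp.smooth for cp in checkpoints}
    if len(outcomes) > 1:
        return "conditional"  # behavior differed between runs
    return "definitive"       # every run agreed


def friction_by_stage(checkpoints: list[Checkpoint]) -> Counter:
    """Count the runs that hit friction, grouped by stage."""
    return Counter(cp.stage for cp in checkpoints if not cp.smooth)


# Illustrative data only: two runs of the same cashier action.
runs = [
    Checkpoint(datetime(2024, 1, 5, 10, 0), "cashier queue", True),
    Checkpoint(datetime(2024, 1, 6, 10, 0), "cashier queue", False),
]
print(label_claim(runs))
print(friction_by_stage(runs))
```

With the sample data, the two runs disagree, so the claim is labelled conditional and the friction is attributed to the cashier queue. A claim backed by a single run would be dropped regardless of its outcome.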

I close each review cycle with a correction pass. If later checks contradict earlier notes, the page is updated and wording is tightened. That ongoing maintenance is slower, but it prevents stale claims from lingering.

For this operator, I check this area against live complaint patterns and support transcripts, then compare those notes with the formal terms. Generic advice fails fast when balance pressure rises, so each subsection sets out a sequence for editorial method and source weighting: what to verify first, what evidence to collect, and how to escalate without fragmenting the case.

One human rule applies: commit to your next action before emotions spike. For this topic, focus on how evidence is weighted before any score is published, and on cross-checking casino claims against observed platform behavior. In Australian player practice, a clear chronology usually beats an aggressive tone. If a thread becomes messy, pause, consolidate, and reopen it with one clean reference chain.

🧾 How sessions are reproduced before scoring

✅ How sessions are reproduced before scoring - action steps

For this operator, I compare live complaint patterns and support transcripts with the formal terms, then sequence the checks: what to verify first, what evidence to collect, and how to escalate without fragmenting the case. For this topic, focus on the replication steps for signup, bonus activation, and withdrawal requests, and on what changed between the first and second test cycles. Commit to your next action before emotions spike; in Australian player practice, a clear chronology usually beats an aggressive tone. If a thread becomes messy, pause, consolidate, and reopen it with one clean reference chain.

🧠 Cashier and support verification process

✅ Cashier and support verification process - action steps

For this operator, I compare live complaint patterns and support transcripts with the formal terms, then sequence the checks: what to verify first, what evidence to collect, and how to escalate without fragmenting the case. For this topic, focus on how support quality is graded under normal and stressed scenarios, and on which timestamps matter most in a payout dispute. Commit to your next action before emotions spike; in Australian player practice, a clear chronology usually beats an aggressive tone. If a thread becomes messy, pause, consolidate, and reopen it with one clean reference chain.

🛟 How risk controls shape final recommendations

✅ How risk controls shape final recommendations - action steps

For this operator, I compare live complaint patterns and support transcripts with the formal terms, then sequence the checks: what to verify first, what evidence to collect, and how to escalate without fragmenting the case. For this topic, focus on how stop-loss and session limits affect the final verdict, and on why the quality of a player's discipline changes practical outcomes. Commit to your next action before emotions spike; in Australian player practice, a clear chronology usually beats an aggressive tone. If a thread becomes messy, pause, consolidate, and reopen it with one clean reference chain.

⚙️ What triggers a score update

✅ What triggers a score update - action steps

For this operator, I compare live complaint patterns and support transcripts with the formal terms, then sequence the checks: what to verify first, what evidence to collect, and how to escalate without fragmenting the case. For this topic, focus on which events force an editorial score revision, and on how contradictory signals are documented. Commit to your next action before emotions spike; in Australian player practice, a clear chronology usually beats an aggressive tone. If a thread becomes messy, pause, consolidate, and reopen it with one clean reference chain.

📌 Publication integrity and correction policy

✅ Publication integrity and correction policy - action steps

For this operator, I compare live complaint patterns and support transcripts with the formal terms, then sequence the checks: what to verify first, what evidence to collect, and how to escalate without fragmenting the case. For this topic, focus on how corrections are published and dated, and on when old claims are rewritten or removed. Commit to your next action before emotions spike; in Australian player practice, a clear chronology usually beats an aggressive tone. If a thread becomes messy, pause, consolidate, and reopen it with one clean reference chain.

🧭 Operational recap before your next session

Method recap: evidence before opinion, repeat tests before conclusions, and clear uncertainty labels when signals conflict.

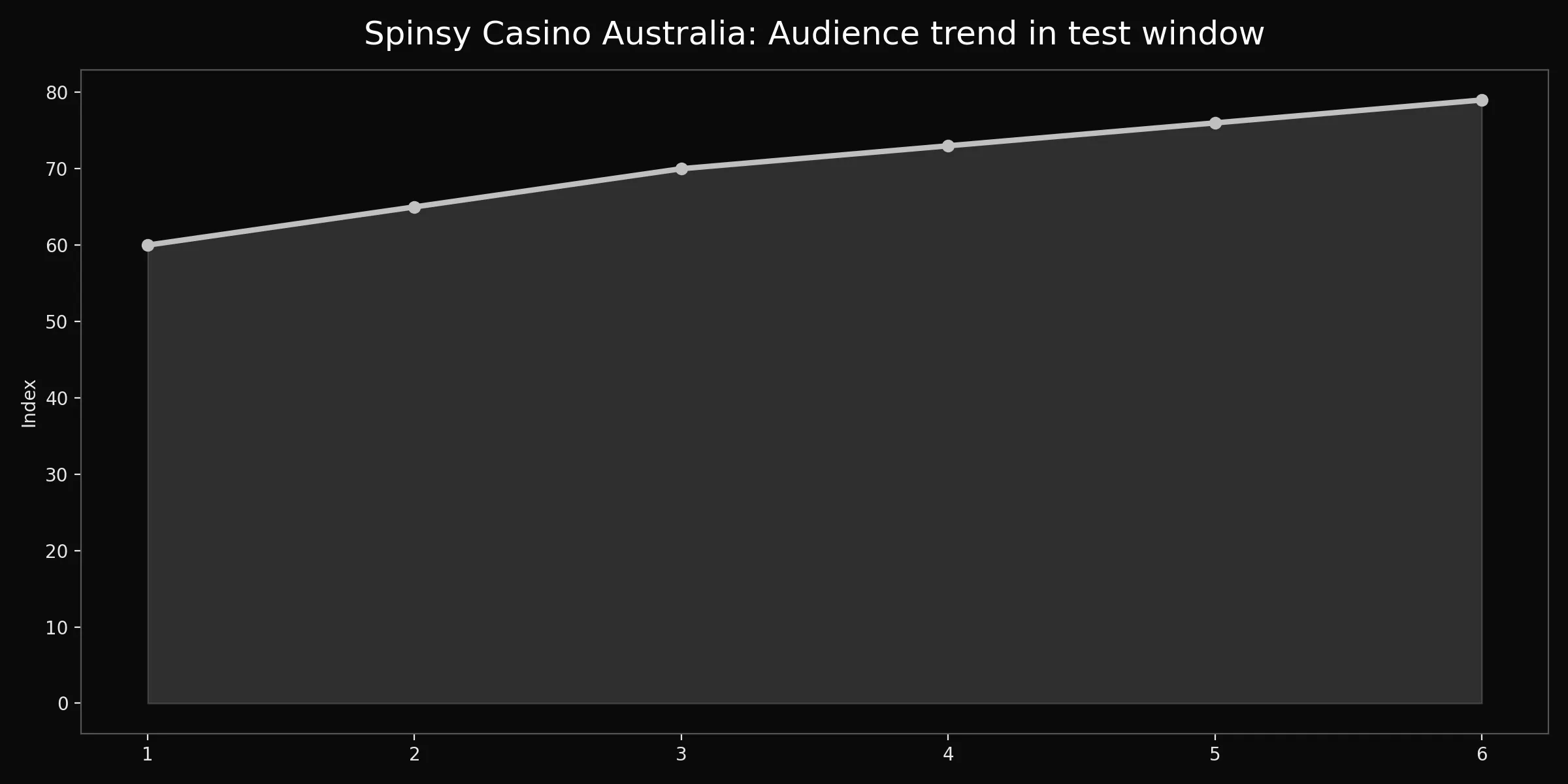

Brand chart for this page

This methodology chart is a calibration lens, not a trophy image. Read trend movement as evidence quality over repeated checks, then compare it with the documented corrections. A rising signal usually means the process became stricter, not louder. A falling signal means confidence is reduced and the wording should be conservative. Use this graphic to understand the editorial discipline before trusting any final score.